Journal of Shanghai University >

Two-dimensional material band gap prediction based on machine learning method

Received date: 2018-09-12

Online published: 2018-09-21

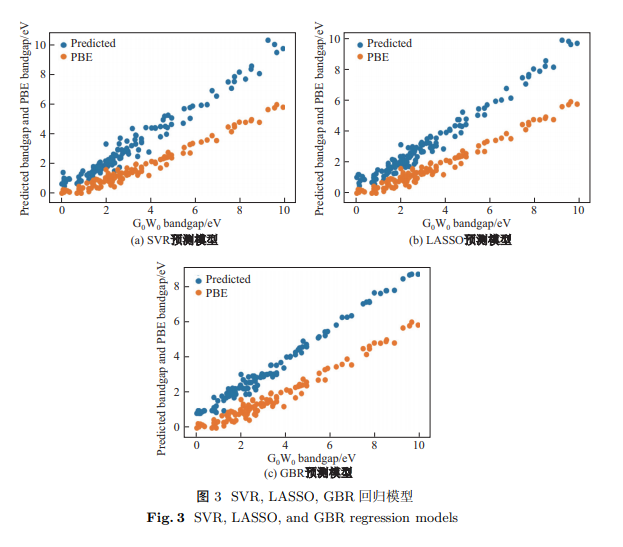

Machine learning (ML) algorithm and the traditional density functional theory (DFT) are combined to study the band gap of two-dimensional metal compounds, and as a result, a simple and effective model which is more cost-effective than the traditional quantum calculation method is established. Results of general gradient approximation-Perdew-Burke-Ernzerhof (GGA-PBE) and G0W0 are taken as reference and a two-dimensional material data set with chemical formula MX2 is investigated. Least absolute shrinkage and selection operator (LASSO), support vector machine regression (SVR) and gradient boosting regressor (GBR) and other machine learning methods are used to build a band gap prediction model. Among these models, it is found that the SVR model based on linear kernel function and LASSO model both can give a good prediction result, the mean absolute error (MAE) of training model is 0.34 eV and MAE of testing set is 0.5 eV. Thus, for the prediction of two-dimensional material band gap, the feature parameter set adopted by us has a certain completeness and rationality, which has a certain reference value for the preliminary prediction for the band gap of new materials.

Key words: two-dimensional materials; first principles; machine learning; band gap

YOU Yang, DU Wan, LI Weiju, CHEN Jingzhe . Two-dimensional material band gap prediction based on machine learning method[J]. Journal of Shanghai University, 2020 , 26(5) : 824 -833 . DOI: 10.12066/j.issn.1007-2861.2089

| [1] | Chhowalla M, Shin H S, Eda G, et al. The chemistry of two-dimensional layered transition metal dichalcogenide nanosheets[J]. Nature Chemistry, 2013,5(4):263-275. |

| [2] | Miro P, Audiffred M, Heine T. An atlas of two-dimensional materials[J]. Chemical Society Reviews, 2014,43(18):6537-6554. |

| [3] | Liu G B, Xiao D, Yao Y, et al. Electronic structures and theoretical modelling of two-dimensional group-VIB transition metal dichalcogenides[J]. Chemical Society Reviews, 2015,44(9):2643-2663. |

| [4] | Segall M, Lindan P J, Probert M A, et al. First-principles simulation: ideas, illustrations and the CASTEP code[J]. Journal of Physics: Condensed Matter, 2002,14(11):2717. |

| [5] | Bickelhaupt F M, Baerends E J. Kohn-Sham density functional theory: predicting and understanding chemistry[J]. Reviews in Computational Chemistry, 2007. DOI: 10.1002/9780470125922.ch1 |

| [6] | Fuchs F, Furthmller J, Bechstedt F, et al. Quasiparticle band structure based on a generalized Kohn-Sham scheme[J]. Physical Review B, 2007,76(11):115109. |

| [7] | Landis D D, Hummelsh J J S, Nestorov S, et al. The computational materials repository[J]. Computing in Science & Engineering, 2012,14(6):51-57. |

| [8] | Tibshirani R. Regression shrinkage and selection via the lasso[J]. Journal of the Royal Statistical Society Series B, 1996,58(1):267-288. |

| [9] | Breiman L. Better subset regression using the nonnegative garrote[J]. Technometrics, 1995,37(4):373-384. |

| [10] | Cortes C, Vapnik V. Support-vector networks[J]. Machine Learning, 1995,20(3):273-297. |

| [11] | Smola A J, Schlkopf B. A tutorial on support vector regression[J]. Statistics and Computing, 2004,14(3):199-222. |

| [12] | Ben-Hur A, Horn D, Siegelmann H T, et al. Support vector clustering[J]. Journal of Machine Learning Research, 2001,2:125-137. |

| [13] | Friedman J H. Stochastic gradient boosting[J]. Computational Statistics & Data Analysis, 2002,38(4):367-378. |

| [14] | Friedman J H. Greedy function approximation: a gradient boosting machine[J]. Annals of Statistics, 2001,29(5):1189-1232. |

| [15] | Samuel A L. Some studies in machine learning using the game of checkers[J]. IBM Journal of Research and Development, 1959,3(3):210-229. |

| [16] | Wernick M N, Yang Y, Brankov J G, et al. Machine learning in medical imaging[J]. IEEE Signal Processing Magazine, 2010,27(4):25-38. |

| [17] | Armstrong J. Illusions in regression analysis[J]. International Journal of Forecasting, 2012,28(3):689-698. |

| [18] | Hofmann T, Schlkopf B, Smola A J. Kernel methods in machine learning[J]. Annals of Statistics, 2008,36(3):1171-1220. |

| [19] | Suykens J A, De Brabanter J, Lukas L, et al. Weighted least squares support vector machines: robustness and sparse approximation[J]. Neurocomputing, 2002,48:85-105. |

| [20] | Wilson J A, Yoffe A D. The transition metal dichalcogenides discussion and interpretation of the observed optical, electrical and structural properties[J]. Advances in Physics, 1969,18(73):193-335. |

| [21] | Kohavi R. A study of cross-validation and bootstrap for accuracy estimation and model selection[C]// IJCAI. 1995: 1137-1145. |

/

| 〈 |

|

〉 |